I’ve started designing custom film cameras, and needed tools to understand what kind of tolerances and imperfections I can get away with when guessing focusing distances. More importantly, I wanted to see what kind of image a lens would create, and how field of view, depth of field, focal length and aperture correspond across different film formats. I couldn’t find good tools online, so I made some myself. I hope they can help someone:

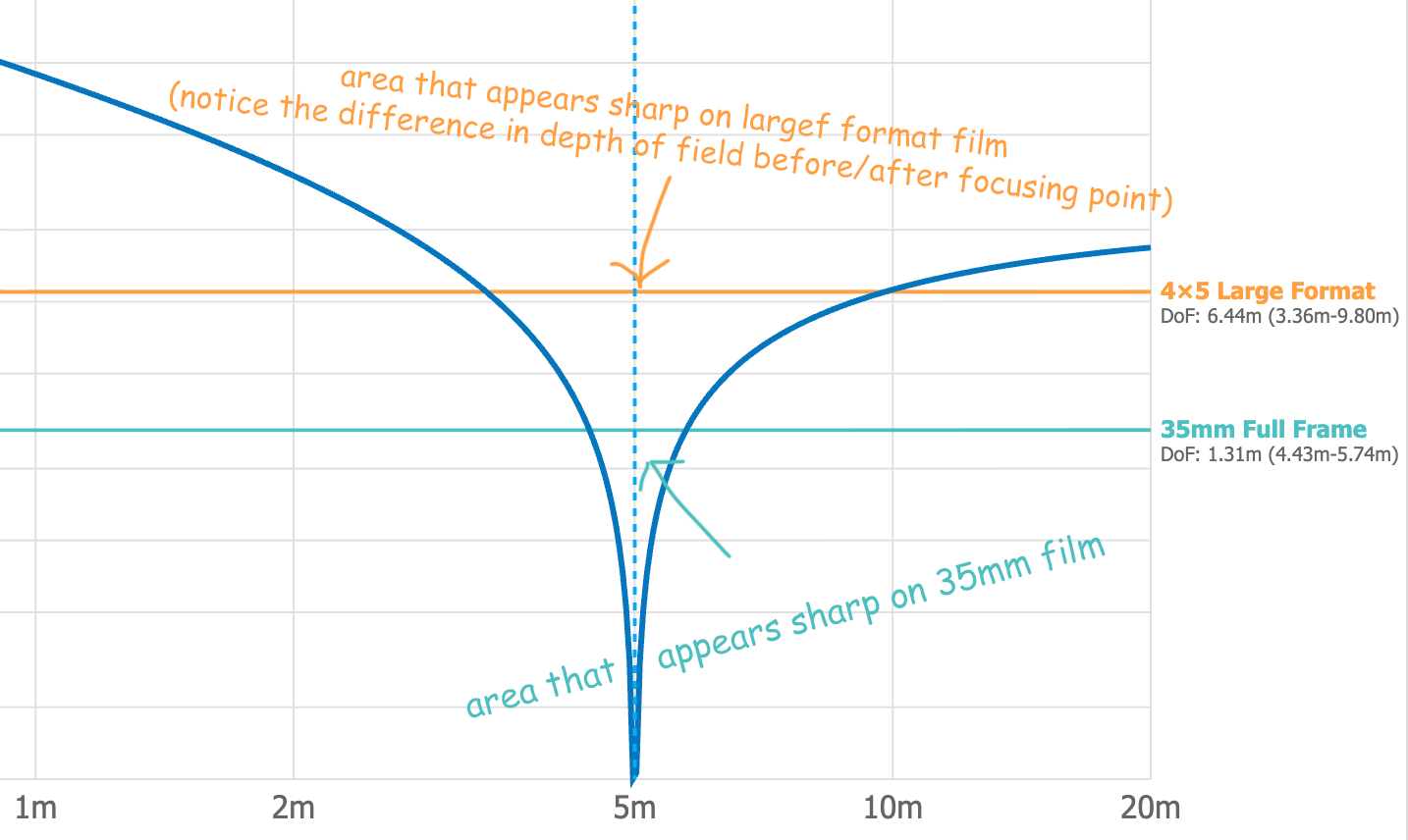

Circle of Confusion Calculator

This one shows what would be in focus and how front/back blur behaves across formats. It was really eye-opening for me to play with it and understand the relationships between the different factors and how they shape the image. The Circle of Confusion (CoC) tells us what a point light source turns into after going through the lens. It is basically the brush with which you paint light. And across film formats (at similar image viewing distances), a similar-sized CoC might paint a sharp picture on one and a blurry picture on another. Play with the tool to find out more!
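To make the "brush size" idea concrete, here is a minimal sketch of the usual thin-lens blur disc formula. It is not the code behind the calculator; the function names are made up for illustration, distances are assumed to be measured from the lens, and the frame diagonals are rough values.

```python
def blur_disc_mm(focal_mm, f_number, focus_mm, subject_mm):
    """Diameter of the blur disc on film for a point at subject_mm
    when the lens is focused at focus_mm (thin-lens approximation)."""
    aperture_mm = focal_mm / f_number                  # entrance pupil diameter
    magnification = focal_mm / (focus_mm - focal_mm)   # at the focus distance
    return aperture_mm * magnification * abs(subject_mm - focus_mm) / subject_mm


def relative_blur(blur_mm, frame_diagonal_mm):
    """Blur disc as a fraction of the frame diagonal, to compare formats."""
    return blur_mm / frame_diagonal_mm


# Example: 50 mm lens at f/2, focused at 2 m, background point at 4 m.
blur = blur_disc_mm(50, 2.0, 2000, 4000)
print(f"blur disc: {blur:.3f} mm")

# The same disc takes up a smaller share of a larger frame.
# Diagonals below are approximate and only meant for comparison.
for name, diagonal_mm in [("35mm", 43.3), ("6x6", 79.0), ("4x5", 153.0)]:
    print(name, f"{relative_blur(blur, diagonal_mm):.4f}")
```

A disc of a given size is a much finer brush on 4x5 than on 35mm, which is why the same CoC can read as sharp on one format and soft on another.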

Background Blur Accumulation Calculator

This one shows how background blur accumulates at different formats and apertures, so you can see what lens you would need on e.g. 35mm to simulate a large format (LF) look. Specifically, it uses an abstract measure of “how much blur will there be for 2 meters behind the focus point”. It was created for environmental portraits and uses the vertical angle of view (instead of the more commonly used diagonal) to give a better feeling for background separation in portraits. It tries to help with the questions “Where should I place the person?” and “What lens and what aperture should I use for better background separation?”
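As a rough illustration of matching formats by vertical angle of view, here is a small sketch. It is not the calculator's own blur measure: it only scales focal length and f-number by the ratio of frame heights, which keeps the framing and the entrance pupil diameter the same. The function names and the frame heights (24 mm for 35mm, about 96 mm for 4x5) are assumptions.

```python
import math


def vertical_aov_deg(frame_height_mm, focal_mm):
    """Vertical angle of view in degrees, lens focused at infinity."""
    return math.degrees(2 * math.atan(frame_height_mm / (2 * focal_mm)))


def equivalent_lens(focal_mm, f_number, from_height_mm, to_height_mm):
    """Focal length and f-number on another format that give the same
    vertical angle of view and the same entrance pupil diameter."""
    scale = to_height_mm / from_height_mm
    return focal_mm * scale, f_number * scale


# Example: a 150 mm f/5.6 lens on 4x5, translated to 35mm film.
focal_35, f_number_35 = equivalent_lens(150, 5.6, from_height_mm=96, to_height_mm=24)
print(f"{focal_35:.1f} mm at f/{f_number_35:.1f}")
print(f"vertical AoV: {vertical_aov_deg(24, focal_35):.1f} degrees")
```

This ignores close-focus effects and the character of real lenses, so treat the result as a starting point and not as a guarantee of the same look.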

Helicoid Extension Calculator

This one shows your helicoid/focusing rail extension across focal lengths and focus distances.
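For a feeling of the numbers involved, here is a minimal sketch based on the thin-lens equation. It assumes the subject distance is measured from the lens and not from the film plane, and the function name is made up for illustration.

```python
def helicoid_extension_mm(focal_mm, subject_mm):
    """Extra lens-to-film distance beyond the infinity position needed
    to focus at subject_mm (thin-lens approximation)."""
    if subject_mm <= focal_mm:
        raise ValueError("subject must be farther away than one focal length")
    return focal_mm ** 2 / (subject_mm - focal_mm)


# Extension needed for a few focal lengths and focus distances.
for focal_mm in (50, 100, 150):
    for distance_m in (1, 2, 5):
        extension = helicoid_extension_mm(focal_mm, distance_m * 1000)
        print(f"{focal_mm} mm lens at {distance_m} m: {extension:.2f} mm")
```

The extension grows with the square of the focal length, which is why long lenses on large formats need so much more travel to focus close.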

Distance Scale Creator

This one is for creating a distance scale and printing it out at home.
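The idea behind such a scale can be sketched in a few lines, again assuming a thin lens, a straight focusing rail, and distances measured from the lens. This is not the tool's own code, and the names below are hypothetical.

```python
import math


def scale_marks(focal_mm, distances_m):
    """Return (label, offset_mm) pairs for a linear distance scale.
    offset_mm is how far the mark sits from the infinity mark."""
    marks = []
    for distance_m in distances_m:
        if math.isinf(distance_m):
            marks.append(("inf", 0.0))
            continue
        subject_mm = distance_m * 1000
        offset_mm = focal_mm ** 2 / (subject_mm - focal_mm)
        marks.append((f"{distance_m:g} m", offset_mm))
    return marks


# Example: marks for a 100 mm lens.
for label, offset_mm in scale_marks(100, [math.inf, 10, 5, 3, 2, 1.5, 1.1]):
    print(f"{label:>6}: {offset_mm:6.2f} mm from infinity")
```

The marks bunch up towards infinity and spread out at close distances, so a guessed distance matters far more for near subjects than for far ones.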

All of them are interactive, so play around and see how it all relates. Let me know if something is not working as expected!

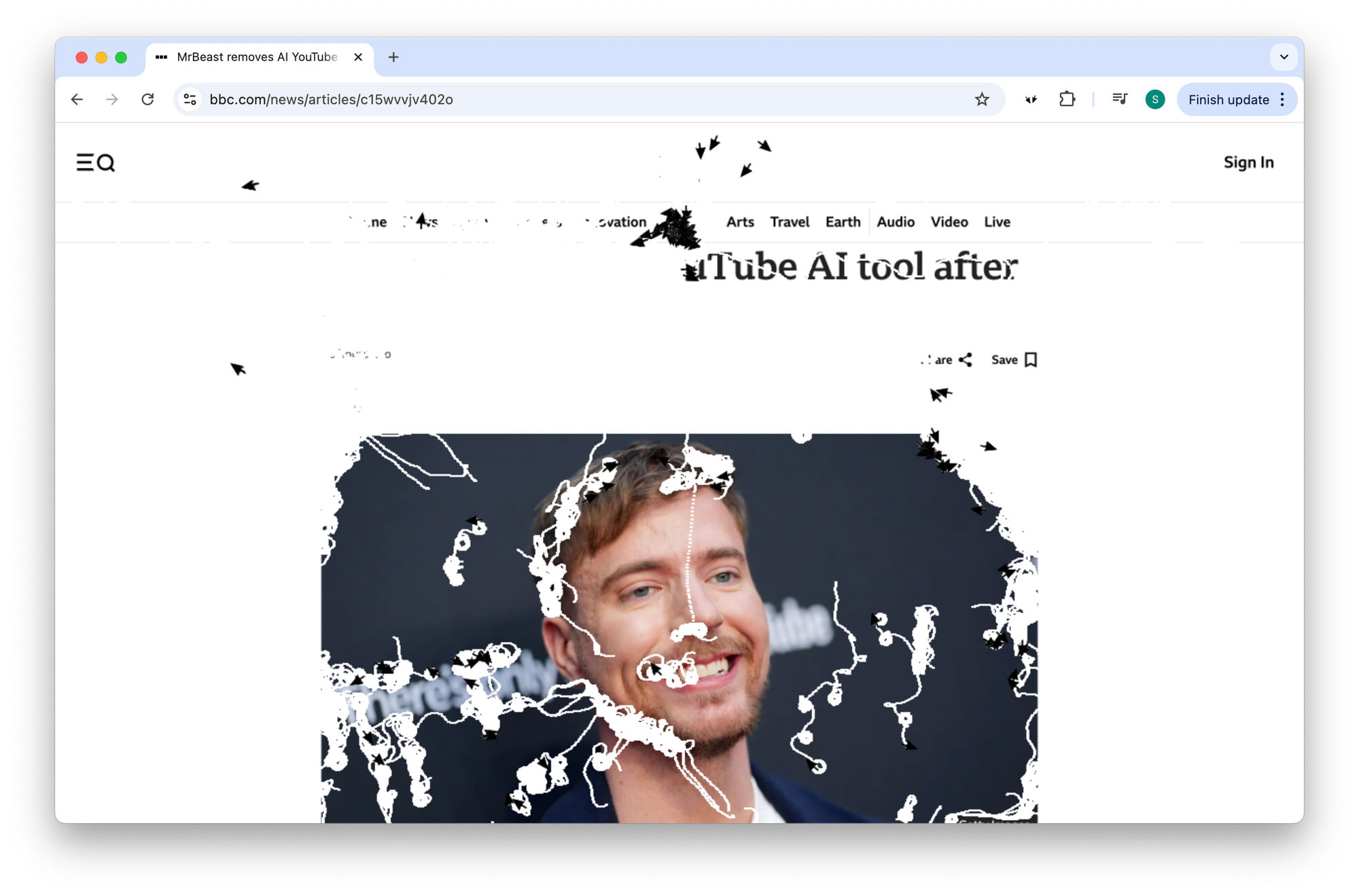

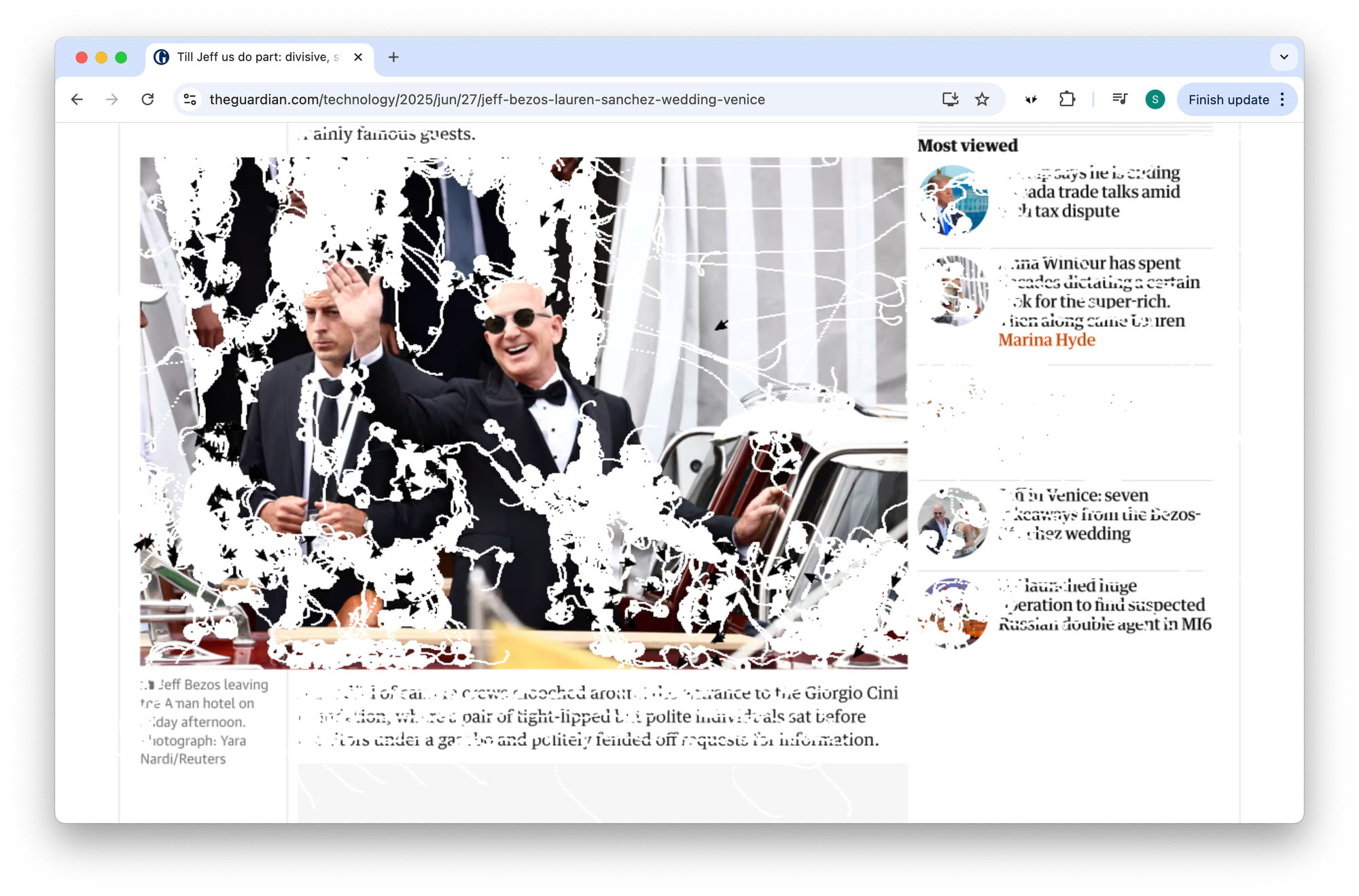

I really hate it when I’m exposed to some horrible news online, and feel powerless. There is nothing I can do, I am not responsible, and yet at the same time I have this feeling “this shouldn’t be happening”.

There is a temptation to immediately react – if it was printed, I would crumple up the paper and throw it away – yet, reading pixels on the screen I don’t have the right gesture to express my discontent.

So I made this little browser extension. Click on a button, and a horde of cursors, like hungry piranhas, will slowly rip the web page apart for you.

Sounds fun? Install on Firefox or Chrome.

This image was taken with a (60-year-old) Bronica C medium format camera and a (120-year-old) Petzval-type lens.

This camera is not only beautiful in a vintage car sort of way, but is also uniquely suited for adapting old lenses to medium format. On a typical camera, the lens houses the helicoid, the mechanical corkscrew-like part that moves the optics closer to and further away from the film, allowing you to focus properly. On the Bronica, the helicoid sits between the body and the lens, so you can adapt all kinds of weird lenses onto it. This particular lens probably stems from the late 19th or early 20th century. It has no markings on the body, but when I disassembled it to clean the glass, I found the inscription “A. Laverne & Co. Paris” on the rim of one of the glass elements. There were quite a few small optics manufacturers in the 19th century - the startups of the era.

The Petzval lens type was the first portrait lens design, created in 1840. It has two elements at the front and two at the back, and has a strong sharpness drop towards the corners. No, wait, it’s more accurate to say that only what’s in the center can ever have a high enough resolution. (Unless, of course, you move the lens off-center, like you can with a large format camera.) But sometimes, this lack of sharpness and the disquieting character of the rendering is what we are after.

Shoutout to Chris and Lina who were so patient with me on this August afternoon!

Sometimes I get tired of the usual composition, of the trained way of seeing. Lately I tried to make photographs that not only depict nothing in particular, but also guide the attention of the viewer around, showing nothing.

The colors guide you in one direction, the focus in another, and the composition in a third. Its only value lies in the movement of the viewer’s eyes, in the choreography of the gaze.